March 30th, 2026

What Is Inferential Statistics? A Complete Guide with Examples

By Zach Perkel · 20 min read

Inferential statistics gives you a way to draw reliable conclusions without surveying an entire population. After applying it across a range of business analyses, here's what I think is worth knowing before you get started.

What is inferential statistics?

Inferential statistics is a branch of statistics that uses data from a small sample group to draw conclusions about a larger population. Rather than collecting data from every single person or record in a group, which is often impractical, you gather a sample and use it to make informed estimates about the whole.

For example, a business doesn't need to survey every one of its 50,000 customers to understand satisfaction trends. From a well-chosen sample of 500, you can infer insights about the broader group, though there is always some level of uncertainty.

Why is inferential statistics important?

Inferential statistics is important because collecting data from an entire population is often too costly, too slow, or simply not practical. It gives you a way to make informed decisions from a smaller, well-chosen sample instead.

In business, waiting for complete data before making a call isn't always an option. I've seen teams spend weeks gathering numbers they didn't really need, when a well-structured sample could’ve pointed them in the same direction in a fraction of the time. Inferential statistics gives you a framework for acting on partial data while accounting for uncertainty in your conclusions.

When should you use inferential statistics?

You should use inferential statistics when you need to draw conclusions about a large group but can only collect data from part of it. It’s the right approach when surveying an entire population would take too long, cost too much, or isn’t practical.

Here are some common situations where it applies:

You're working with a sample, not a full dataset: If your data only covers part of the population, inferential statistics helps you extend those findings to the broader group, with some uncertainty.

You need to test whether a result is meaningful: If you want to know whether a change in performance, behavior, or outcomes reflects a real effect, hypothesis testing helps you evaluate how plausible it is that random variation alone produced it.

You're trying to predict or forecast: When you want to estimate how a larger group might behave based on what you've seen in a smaller one, inferential statistics gives you a structured way to make those estimates based on sample data.

You're comparing two or more groups: If you want to know whether two customer segments, product variations, or time periods are different, inferential statistics gives you the tools to test if that difference is statistically meaningful.

Key inferential statistics terms and concepts

Inferential statistics has its own set of terms that come up repeatedly, and understanding them makes the whole process much easier to follow. Here are the ones worth knowing:

Population: The full group you want to draw conclusions about. This could be all of your customers, every transaction in a dataset, or an entire market segment.

Sample: A smaller subset of the population you actually collect data from, ideally chosen to reflect the broader group. I'd say this is the most important concept to get right, because a poorly chosen sample can skew the conclusions that follow.

P-value: A number that shows how likely it is to see results at least as extreme as yours if there were no real effect or difference in the population. A p-value below 0.05 is often treated as statistically significant, which suggests your result would be unusual if random chance were the only thing at work.

Confidence interval: A range of values where the real answer for your population is likely to sit. A 95% confidence interval means that if you repeated the study many times, about 95% of those intervals would capture the true population value. I find this one of the more intuitive concepts once you see it applied to real data.

Margin of error: The amount of uncertainty built into your estimate. A smaller sample size tends to produce a wider margin of error, which means less precision in your conclusions, though it also depends on how varied your data is and the confidence level you choose.
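To make the link between sample size and margin of error concrete, here's a minimal sketch using only Python's standard library. It assumes you're estimating a proportion and uses the common normal approximation. The function name `margin_of_error` and the sample sizes are hypothetical choices for illustration.

```python
from math import sqrt
from statistics import NormalDist


def margin_of_error(p_hat: float, n: int, confidence: float = 0.95) -> float:
    """Normal-approximation margin of error for a sample proportion."""
    # Critical value for a two-sided interval (about 1.96 at 95% confidence).
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * sqrt(p_hat * (1 - p_hat) / n)


# p_hat = 0.5 is the most conservative case (widest margin).
for n in (100, 400, 1600):
    print(f"n={n:>4}: +/- {margin_of_error(0.5, n):.1%}")
```

Under these assumptions, quadrupling the sample size cuts the margin of error in half, which is why precision gets expensive quickly.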

Types of inferential statistics with examples

Inferential statistics covers several different methods, and the right one depends on what question you're trying to answer. Here are the main types you're likely to come across:

Hypothesis testing

Hypothesis testing is a way of checking whether a result from your sample data reflects a real pattern in the broader population or could just be due to chance.

Say a marketing team runs a new email campaign and sees a 10% jump in click-through rates. Hypothesis testing helps you check if that lift is real or just random. The p-value tells you how likely it would be to see a lift at least that large if the campaign had no real effect. A p-value below 0.05 is a common benchmark: it means a result this extreme would show up less than 5% of the time by chance alone. It does not mean there's a 5% probability that the finding itself is random.
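Here's a rough sketch of what that check could look like for the email example, written as a two-proportion z-test with Python's standard library. The send and click counts are made up for illustration (a 10% click rate rising to 11%, which is a 10% relative lift), and `two_proportion_z_test` is a hypothetical helper name, not a library function.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(clicks_a, sends_a, clicks_b, sends_b):
    """Two-sided z-test for a difference between two click-through rates."""
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    # Under the null hypothesis both campaigns share one underlying rate.
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    se = sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: old campaign 100/1000 clicks, new campaign 110/1000.
z, p_value = two_proportion_z_test(100, 1000, 110, 1000)
print(f"z = {z:.2f}, p = {p_value:.2f}")
```

With these made-up numbers the p-value lands well above 0.05, so a lift of this size on 1,000 sends per campaign could easily be noise. The same relative lift on a much larger send volume could tell a different story.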

Confidence intervals

Confidence intervals give you a range of values where the population result is likely to fall, rather than pinning everything on a single number. You calculate that range using your sample size, the variation in your data, and your chosen confidence level.

For example, a retail brand surveys 400 customers and finds that 68% are satisfied with their service. A 95% confidence interval might estimate the true value to fall between 63% and 73%. I find this framing more useful in practice than a single percentage, because it acknowledges the margin of error built into any sample-based estimate.
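The interval in that example can be reproduced with a few lines of Python. This is a sketch that assumes the simple normal-approximation (Wald) interval for a proportion; other interval methods would give slightly different endpoints.

```python
from math import sqrt
from statistics import NormalDist

n = 400          # customers surveyed
p_hat = 0.68     # share who said they were satisfied
confidence = 0.95

# Critical value for a two-sided interval (about 1.96 at 95% confidence).
z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
margin = z * sqrt(p_hat * (1 - p_hat) / n)
low, high = p_hat - margin, p_hat + margin

print(f"{confidence:.0%} CI: {low:.1%} to {high:.1%}")
```

Under that assumption, the interval works out to roughly 63.4% to 72.6%, which matches the rounded 63% to 73% range above.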

Regression analysis

Regression analysis is a technique for modeling the relationship between two or more variables. A SaaS company might use it to explore how increases in onboarding support hours relate to customer retention rates. The analysis won't tell you that one thing causes another, but it can show you how closely those two variables move together, which can still be useful for informing decisions.
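As a small illustration, here's a sketch of a simple linear regression fitted by ordinary least squares in plain Python. The onboarding hours and retention percentages are invented for the example, and with only five points the output is illustrative rather than something to act on.

```python
from math import sqrt

# Hypothetical data: onboarding support hours and retention rate (%).
hours = [1, 2, 3, 4, 5]
retention = [62, 66, 71, 73, 78]

n = len(hours)
mean_x = sum(hours) / n
mean_y = sum(retention) / n
sxx = sum((x - mean_x) ** 2 for x in hours)
syy = sum((y - mean_y) ** 2 for y in retention)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, retention))

slope = sxy / sxx                      # change in retention per extra hour
intercept = mean_y - slope * mean_x
r = sxy / sqrt(sxx * syy)              # correlation coefficient

# Standard error of the slope, used to judge whether it differs from zero.
residual_ss = sum((y - (intercept + slope * x)) ** 2
                  for x, y in zip(hours, retention))
slope_se = sqrt(residual_ss / (n - 2) / sxx)
t_stat = slope / slope_se

print(f"retention = {intercept:.1f} + {slope:.1f} * hours (r = {r:.3f})")
print(f"slope t-statistic = {t_stat:.1f} on {n - 2} degrees of freedom")
```

The slope's t-statistic is what a statistics package would convert into a p-value for the relationship. In practice you'd likely let a library such as StatsModels handle that step.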

T-tests

A t-test compares the averages of two groups to see whether the gap between them is statistically meaningful or within the range of normal variation. If you're running an A/B test on two versions of a landing page, a t-test shows whether the difference in conversion rates between the two versions is larger than you'd expect from random variation alone.
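Here's a minimal sketch of the calculation behind a two-sample t-test, using Welch's version, which doesn't assume the two groups have equal variance. The data is hypothetical (a continuous metric such as order value per visitor on each page version), and `welch_t` is an illustrative helper, not a library function.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Welch's t-statistic and approximate degrees of freedom."""
    # Squared standard error of each group's mean.
    va = variance(sample_a) / len(sample_a)
    vb = variance(sample_b) / len(sample_b)
    t = (mean(sample_b) - mean(sample_a)) / sqrt(va + vb)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (va + vb) ** 2 / (
        va ** 2 / (len(sample_a) - 1) + vb ** 2 / (len(sample_b) - 1)
    )
    return t, df


# Hypothetical order values for visitors to each landing page version.
page_a = [12, 15, 11, 14, 13]
page_b = [16, 18, 15, 17, 19]

t_stat, df = welch_t(page_a, page_b)
print(f"t = {t_stat:.2f}, df = {df:.1f}")
```

Turning the t-statistic into a p-value requires the t-distribution, which Python's standard library doesn't include. A statistics library such as SciPy would typically handle that step.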

Chi-square tests

Chi-square tests help you determine whether two categorical variables are related. For instance, if you want to know whether customer segment and product preference are connected, a chi-square test helps you assess whether that relationship is likely real or due to chance.
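To show the mechanics, here's a sketch of a chi-square test of independence on a 2x2 table in plain Python. The counts are invented (two customer segments by two product preferences), and the p-value shortcut used here only holds for one degree of freedom, with no continuity correction applied.

```python
from math import erfc, sqrt

# Hypothetical counts. Rows: customer segment; columns: preferred product.
observed = [
    [30, 20],  # segment 1: product A, product B
    [20, 30],  # segment 2: product A, product B
]

row_totals = [sum(row) for row in observed]
col_totals = [sum(col) for col in zip(*observed)]
total = sum(row_totals)

chi2 = 0.0
for i, row in enumerate(observed):
    for j, count in enumerate(row):
        # Count expected in this cell if segment and preference were unrelated.
        expected = row_totals[i] * col_totals[j] / total
        chi2 += (count - expected) ** 2 / expected

# For a 2x2 table (1 degree of freedom) the p-value has a closed form.
p_value = erfc(sqrt(chi2 / 2))
print(f"chi-square = {chi2:.2f}, p = {p_value:.3f}")
```

Larger tables have more degrees of freedom and need the general chi-square distribution, which is where a statistics library comes in.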

ANOVA (analysis of variance)

ANOVA does what a t-test does, but across three or more groups at once. A product team testing three different onboarding flows across separate user groups could use it to find out whether performance differences across those groups are meaningful, instead of running multiple t-tests and increasing the risk of incorrect conclusions.
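Here's a small sketch of the F-statistic at the heart of a one-way ANOVA, written in plain Python. The three groups stand in for hypothetical scores from three onboarding flows, and `one_way_anova_f` is an illustrative helper rather than a library call.

```python
from statistics import mean


def one_way_anova_f(*groups):
    """F-statistic and degrees of freedom for a one-way ANOVA."""
    all_values = [value for group in groups for value in group]
    grand_mean = mean(all_values)
    # Variation between the group means and the overall mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual values around their own group mean.
    ss_within = sum((value - mean(g)) ** 2 for g in groups for value in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical scores for three onboarding flows.
flow_a = [4, 5, 6]
flow_b = [6, 7, 8]
flow_c = [8, 9, 10]

f_stat, df_between, df_within = one_way_anova_f(flow_a, flow_b, flow_c)
print(f"F = {f_stat:.1f} on ({df_between}, {df_within}) degrees of freedom")
```

A large F means the group averages differ by more than the spread within each group would suggest. A statistics package would convert it into a p-value using the F-distribution.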

Tools you can use for inferential statistics

Inferential statistics used to require a statistics degree or a dedicated analyst. But these days, you can use tools like:

Julius: An AI-powered data analysis tool that lets you run inferential analyses by describing what you want to test in everyday language. You upload your dataset and ask your question, then Julius suggests an appropriate method, runs the analysis, and explains the results in plain English.

Python: A popular programming language with libraries like SciPy and StatsModels that cover a wide range of inferential methods. It's highly capable but requires coding knowledge, which makes it a better fit for technical users than most business teams.

Minitab: A dedicated statistical software tool built specifically for inferential analysis. It's widely used in manufacturing and quality control, and its interface is more approachable than R or Python, though it comes with a subscription cost.

SPSS: A statistical software tool used widely in research and academia. It covers a broad range of inferential methods and offers a point-and-click interface, but it still comes with a steeper learning curve and a higher price tag than most options on this list.

SAS: An enterprise-grade analytics platform with deep inferential statistics capabilities. It's a strong option for large organizations with complex analysis needs, though the cost and technical requirements can put it out of reach for smaller teams.

How to run inferential statistics in Julius: Step-by-step

Many analysis tools can run inferential statistics for you once you've set up your data correctly. For this walkthrough, we'll use Julius, which lets you run the analysis by typing your question in plain English.

Here's how:

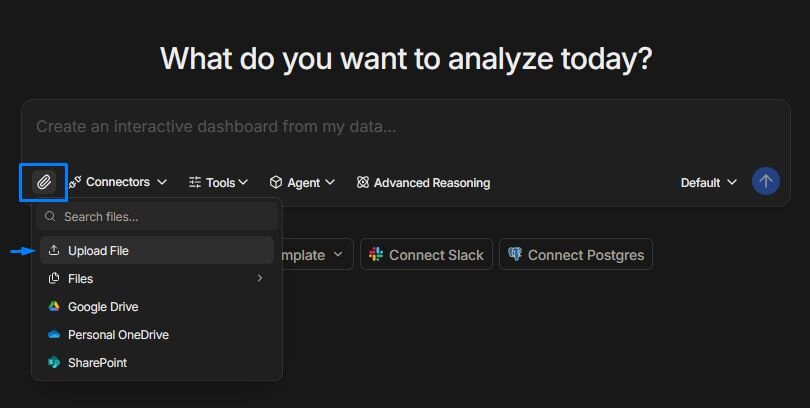

Step 1: Upload your data

Upload your data to Julius by clicking on the paperclip icon in the chat window. If your data lives in a connected source like Postgres, Snowflake, or Google Sheets, you can pull it in from there using the Connectors button.

Step 2: Define what you want to test

Before you run anything, get clear on your question. Are you comparing two groups? Testing whether a result is statistically significant? Looking for a relationship between two variables? The clearer your question, the more useful your output will be.

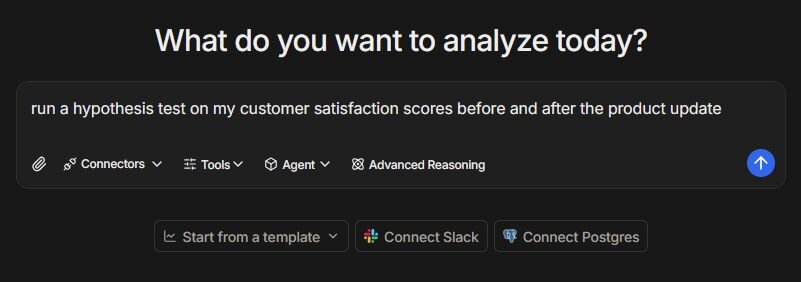

Step 3: Ask Julius to run the analysis, then review your results

Type your question in plain English. Something like "run a hypothesis test on my customer satisfaction scores before and after the product update" or "show me whether these regional sales figures are significantly different from each other" gives Julius enough context to pick the right method and run it.

Julius returns your results with a plain-English summary alongside the numbers. You can also ask follow-up questions to better understand your data.

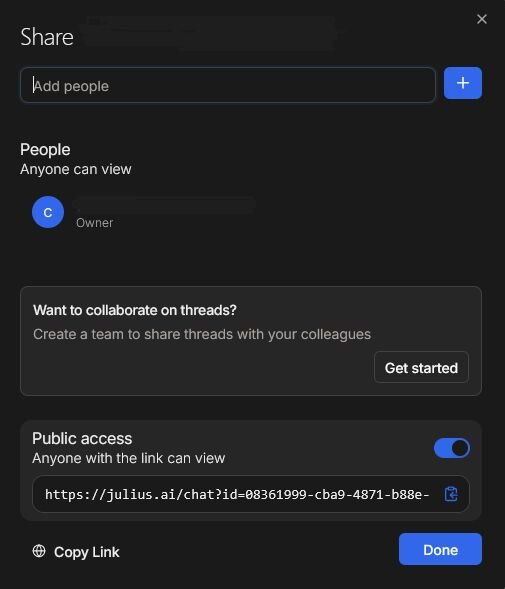

Step 4: Export or share your results

Once you have your results, you can download them as a CSV or PDF, or share them directly from Julius via a link.

Common mistakes and limitations

Inferential statistics is a useful way to draw conclusions from sample data, but it's easy to misuse if you're not careful. Here are some of the most common pitfalls to watch out for:

Using a sample that doesn't represent your population: If your sample skews toward a particular group, age range, or behavior, your conclusions may not hold up across the broader population. In my experience, this is one of the quieter mistakes, because a poorly chosen sample isn't always obvious until you start questioning the results.

Confusing statistical significance with practical significance: A result can be statistically significant without actually mattering in the real world. A p-value below 0.05 suggests the result is unlikely to be due to chance, but it doesn't tell you whether the effect is large enough to act on. I'd always recommend asking "so what does this mean for the business?" before drawing conclusions.

Treating correlation as causation: Inferential statistics can show you that two variables are associated, but that doesn't mean one is causing the other. I've seen this lead teams to act on a connection that turned out to be two metrics reacting to the same underlying variable, not each other.

Using too small a sample: A sample that's too small tends to produce a wide margin of error, which makes your conclusions less reliable. It also lowers statistical power, so real effects are easier to miss, and any significant result you do find is more likely to overstate the true effect. I find that teams often underestimate how much sample size affects the quality of their output.

Running too many tests at once: The more tests you run on the same dataset, the higher the chance that at least one result will appear significant purely by chance. This is worth keeping in mind when exploring data across multiple variables, and it's a mistake I've seen catch even experienced analysts off guard.

Ignoring the assumptions behind each test: Most inferential tests come with assumptions about your data, such as how it's distributed or whether the groups you're comparing are independent. Running a test without checking those assumptions can produce misleading results, and it's one of those steps that's easy to skip when you're in a hurry.
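The "too many tests" pitfall above is easy to quantify. The sketch below assumes the tests are independent and each uses a 0.05 threshold. The function names are hypothetical, and the Bonferroni correction shown is one common, fairly conservative adjustment, not the only option.

```python
def familywise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** num_tests


def bonferroni_threshold(num_tests: int, alpha: float = 0.05) -> float:
    """Per-test threshold that keeps the overall error rate near alpha."""
    return alpha / num_tests


for k in (1, 5, 20):
    print(
        f"{k:>2} tests: {familywise_error_rate(k):.0%} chance of a false "
        f"positive, Bonferroni threshold {bonferroni_threshold(k):.4f}"
    )
```

Under these assumptions, 20 tests on data with no real effects still carry roughly a 64% chance of producing at least one "significant" result.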

Inferential vs. descriptive statistics

Descriptive statistics summarize the data you already have. They describe what happened in your dataset, nothing more and nothing less. If you calculate the average order value across last month's transactions or count how many customers churned in Q3, you're using descriptive statistics. The conclusions stay within the boundaries of your data.

Inferential statistics goes a step further. Rather than just describing what's in your sample, you use the sample to draw conclusions about a larger group. I find the distinction becomes clearest when you ask yourself whether you're trying to describe your data or make an estimate beyond it.

Here's a simple way to think about the difference:

| | Descriptive statistics | Inferential statistics |
|---|---|---|
| Purpose | Summarizes existing data | Draws conclusions about a larger population |
| Scope | Limited to your dataset | Extends beyond your sample |
| Output | Averages, totals, and distributions | Estimates, probabilities, and significance tests |
| Certainty | High, based on actual data | Lower, because it involves estimation and uncertainty |
| Example | Average revenue per customer last quarter | Predicting average revenue across all future customers |

Want to run inferential statistics without the math? Try Julius

Inferential statistics can tell you whether your results are meaningful, but running the analysis manually takes time and leaves room for error. With Julius, you can upload your dataset, type your question in plain English, and get your results along with a clear interpretation without writing code.

Here’s how Julius helps:

Direct connections: Link databases like PostgreSQL, Snowflake, and BigQuery, or integrate with Google Ads and other business tools. You can also upload CSV or Excel files. Your analysis can reflect live data, so you’re less likely to rely on outdated spreadsheets.

Hypothesis testing without coding: Ask whether your sample average differs from a target value and review the resulting t-value and p-value without writing statistical formulas.

Follow-up analysis in the same workspace: After running a t-test, you can continue asking questions about the same dataset to explore trends, segments, or outliers.

Repeatable Notebooks: Save an analysis as a notebook and run it again with fresh data whenever you need. You can also schedule notebooks to send updated results to email or Slack.

Smarter over time: Julius includes a Learning Sub Agent, an AI that adapts to your database structure over time. It learns table relationships and column meanings as you work with your data, which can help improve result accuracy.

Built-in visualization: Get histograms, box plots, and bar charts on the spot instead of jumping into another tool to build them.

One-click sharing: Turn an analysis into a PDF report you can share without extra formatting.

Ready to analyze your data using everyday language? Try Julius for free today.

Frequently asked questions

What is a p-value in inferential statistics?

A p-value is a number that shows how likely it would be to see results at least as extreme as yours if there were no real effect or difference between the groups or variables you’re testing. A p-value below 0.05 is often used as a threshold for statistical significance, which suggests results like yours would be unusual if chance were the only explanation. Lower p-values provide stronger evidence against the idea that there is no real effect.

What do you need for inferential statistics to produce reliable results?

For inferential statistics to produce reliable results, your sample should be randomly selected, and your data points should be independent of each other. Many common tests also assume a roughly normal distribution in your data, though this tends to matter less with larger samples.

Can you use inferential statistics in Excel?

Yes, Excel can run basic inferential statistics tests. Its Analysis ToolPak add-in covers t-tests, ANOVA, and regression analysis, and worksheet functions such as CHISQ.TEST handle chi-square tests. It works well for straightforward analyses on smaller datasets, but it can become difficult to manage as your data grows or your analysis gets more complex. Dedicated analysis tools tend to handle larger datasets and more advanced methods more effectively.