April 22nd, 2026

17 Best-Rated Predictive Analytics Tools for Data Professionals

By Zach Perkel · 32 min read

17 Best-rated predictive analytics tools for data professionals

| 💻 Tool | 🎯 Best for | 🔥 Starting price (billed annually) | ⚡ Strengths |
|---|---|---|---|
| DataRobot | Enterprise AutoML and model deployment | | AutoML, model governance, and outcome tracking |
| SAS Viya | Governed, cloud-native data science | Custom pricing | Distributed processing, open-source integration, and audit trails |
| H2O.ai | Fast, open-source AutoML | | AutoML, Python and R support, and flexible deployment |
| Julius | Fast data analysis and light forecasting without the model-building overhead | Free plan; Pro from $16/month | Natural language analysis, web data search, live financial data for 17,000+ companies, and scheduled reports |
| Alteryx | No-code data prep and blending | | Drag-and-drop workflows, geospatial tools, and Python and R integration |
| Databricks | Large-scale ML pipelines and data engineering | | Unified lakehouse, collaborative notebooks, and Spark-based processing |
| IBM SPSS Modeler | Visual predictive modeling | $529/month, billed monthly | Visual modeling interface, time series forecasting, and statistical depth |
| Tableau | Interactive dashboards with predictive visualization | $15/user/month; a Creator license is also required at $75/user/month | Advanced data visualization, broad connector support, and AI-assisted analytics |
| Qlik Sense | Associative data exploration | $300/month, includes 10 users | Associative analytics, natural language queries, and embedded BI |
| Amazon SageMaker | End-to-end ML within AWS | | End-to-end ML lifecycle, deep AWS integration, and MLOps tooling |
| SAP Analytics Cloud | Planning and forecasting for SAP users | | SAP-native integration, scenario planning, and embedded ML |
| RapidMiner (Altair) | Visual, code-optional ML workflows | | AutoML, drag-and-drop workflow builder, and SAS code compatibility |
| MicroStrategy | Scalable enterprise BI and reporting | $13/user/month, billed monthly | Enterprise reporting, AI-assisted dashboards, and broad data connectivity |
| IBM Cognos Analytics | AI-powered enterprise reporting | | Automated data prep, decision trees, and AI-assisted exploration |
| Microsoft Azure ML | Building and deploying ML within Microsoft | | MLOps, Azure integration, and code-first and no-code workflows |
| Google Looker | Governed, SQL-based analytics | | LookML modeling, Google Cloud integration, and consistent metric definitions |
| KNIME | Free, visual predictive modeling | $19/month, billed monthly | Open-source platform, node-based workflows, and deep learning integrations |

How I researched and tested these predictive analytics tools

I tested the tools I could access directly by uploading datasets, running queries, and building basic models to see where each one delivers and where it falls short. For tools I couldn't access directly, I went through demos, documentation, and verified user reviews to understand how they perform in practice.

Here's what I considered:

Ease of use: Whether a non-coder or business user can get up and running without a steep learning curve.

Modeling capabilities: What kinds of forecasts and predictive models you can build, and how much technical knowledge they may require.

Data connectivity: How well the tool connects to your existing data sources, databases, and cloud platforms.

Pricing vs. value: What you get at each tier and whether the features justify the cost.

Documentation and support: How clear the setup guides are and how easy it is to find help when you need it.

Some of these tools are built for data scientists with deep technical skills, and some are built for business users who just want answers fast. I've tried to be clear about who each tool is really for, so you can make the right call for your team.

1. DataRobot: Best for enterprise AutoML and model deployment

What it does: DataRobot is an enterprise AI platform that automates the process of building, deploying, and monitoring machine learning (ML) models across your organization.

Best for: Enterprise data teams that need to build, deploy, and govern predictive models at scale without relying on a large data science team.

Key features

AutoML: Automatically run multiple machine learning algorithms against your dataset and rank the results by performance metrics.

MLOps monitoring: Track deployed models over time and flag performance degradation or data drift after deployment.

AI governance: Set access controls, audit trails, and compliance documentation across all models built within the platform.
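Drift monitoring of the kind described above usually comes down to comparing how a feature was distributed at training time with what the deployed model sees now. The sketch below uses the population stability index (PSI), a common drift metric. It is a generic illustration, not DataRobot's implementation; the four equal-width bins, the epsilon floor, the made-up data, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin; `edges` are the interior bin boundaries."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # Bin index = number of interior edges at or below v.
        idx = sum(1 for e in edges if v >= e)
        counts[idx] += 1
    # Floor empty bins at a small epsilon so the log term below stays defined.
    return [max(c / len(values), 1e-6) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], n_bins: int = 4) -> float:
    """Population stability index of a live sample against a training baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins
    # Equal-width bins over the baseline range; live values outside it land in the end bins.
    edges = [lo + width * i for i in range(1, n_bins)]
    e_shares = bin_shares(expected, edges)
    a_shares = bin_shares(actual, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))


baseline = [1, 2, 3, 4, 5, 6, 7, 8]  # feature values seen at training time (made up)
live = [6, 7, 7, 8, 8, 8, 9, 9]      # values seen after deployment (made up)
score = psi(baseline, live)
print("drift" if score > 0.2 else "stable")  # prints "drift"
```

A PSI near zero means the live data looks like the training data; the larger the score, the more the inputs have shifted and the more likely the model needs a review or retraining.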

Pros and cons

| ✅ Pros | ❌ Cons |
|---|---|
| Automates model selection and comparison, which can reduce the need for deep data science expertise | Initial infrastructure setup can require significant IT involvement |
| Built-in governance tools can make it easier to manage models in regulated industries | The interface can feel overwhelming for users who only need basic forecasting |
| Ties model performance back to measurable business outcomes | |

What users say

Pricing

Bottom line

2. SAS Viya: Best for governed, cloud-native data science

What it does: SAS Viya is a cloud-based analytics platform that supports data preparation, machine learning, and model deployment across SAS, Python, and R environments.

Best for: Data science teams in regulated industries that need auditable, reproducible workflows and open-source language support in one governed environment.

Key features

Distributed processing (CAS): Run data queries and model training jobs across a distributed in-memory engine to handle larger analytical workloads.

Open-source integration: Build and run models in SAS, Python, and R within a single environment without switching between tools.

Model governance: Track model lineage, version history, and audit trails across all projects built within the platform.

Pros and cons

| ✅ Pros | ❌ Cons |
|---|---|
| Open-source integration can allow teams to work across SAS, Python, and R without switching platforms | The interface can take time to learn |
| Audit trail features can make compliance reporting more manageable in regulated industries | Non-technical users may find the platform difficult to navigate without data science experience |
| Distributed processing can handle larger analytical workloads without moving data to a separate environment | |

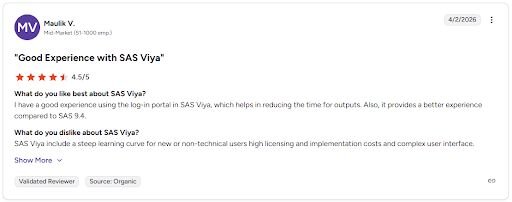

What users say

Con: "SAS Viya include[s] a steep learning curve for new or non-technical users. high licensing and implementation costs and complex user interface." - Maulik V., G2

Pricing

SAS Viya offers custom pricing.

Bottom line

3. H2O.ai: Best for fast, open-source AutoML

What it does: H2O.ai is an open-source machine learning platform that automates model building and supports a wide range of algorithms, including deep learning, gradient boosting, and random forests.

Best for: Data science teams that need fast, flexible AutoML with deployment options across cloud and air-gapped environments.

Key features

AutoML: Run multiple algorithms automatically against your dataset and return a ranked leaderboard of model performance results.

H2O Flow: Build, run, and inspect machine learning workflows through a browser-based notebook interface without writing code.

Flexible deployment: Deploy trained models across cloud, on-premise, or air-gapped environments depending on your security requirements.
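The leaderboard idea above can be pictured without any ML library: fit several candidate models, score each on held-out data, and sort by the metric. This is a toy sketch, not H2O's API. The three candidates (a mean baseline, one-feature least squares, and 2-nearest-neighbors), the tiny made-up dataset, the single train/test split, and mean absolute error (MAE) as the ranking metric are all assumptions; H2O's AutoML trains far richer model families and typically scores them with cross-validation.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Model = Callable[[float], float]
Fitter = Callable[[List[float], List[float]], Model]


def fit_mean(xs: List[float], ys: List[float]) -> Model:
    """Baseline: always predict the training mean."""
    m = mean(ys)
    return lambda x: m


def fit_linear(xs: List[float], ys: List[float]) -> Model:
    """Ordinary least squares with one feature: y = a + b * x."""
    mx, my = mean(xs), mean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


def fit_knn(xs: List[float], ys: List[float], k: int = 2) -> Model:
    """k-nearest-neighbors regression: average the k closest training targets."""
    pairs = list(zip(xs, ys))

    def predict(x: float) -> float:
        nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
        return mean(y for _, y in nearest)

    return predict


def leaderboard(
    train: List[Tuple[float, float]],
    test: List[Tuple[float, float]],
    candidates: Dict[str, Fitter],
) -> List[Tuple[str, float]]:
    """Fit every candidate on train, score MAE on test, best (lowest) first."""
    xs, ys = zip(*train)
    results = []
    for name, fit in candidates.items():
        model = fit(list(xs), list(ys))
        mae = mean(abs(model(x) - y) for x, y in test)
        results.append((name, mae))
    return sorted(results, key=lambda r: r[1])


train = [(1, 2.1), (2, 3.9), (3, 6.2), (4, 8.1), (5, 9.8)]  # made-up data
test = [(6, 12.1), (7, 13.9)]
board = leaderboard(train, test, {"mean": fit_mean, "linear": fit_linear, "knn": fit_knn})
for name, mae in board:
    print(f"{name}: MAE={mae:.2f}")
```

On this roughly linear data the least-squares model tops the board, and the mean baseline, as expected, lands last. Keeping a trivial baseline in the leaderboard is a cheap sanity check that the other models are actually learning something.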

Pros and cons

✅ Pros | ❌ Cons |

|---|---|

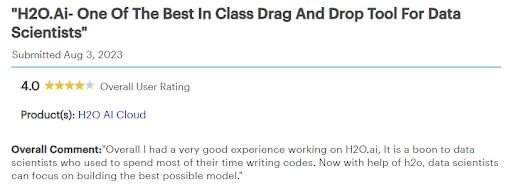

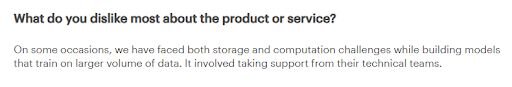

Open-source foundation can make it accessible for teams that need a capable ML platform without enterprise licensing costs | Storage and computation can become a bottleneck when training models on larger datasets |

AutoML leaderboard can make it easier to compare model performance without manually running each algorithm | Documentation can be inconsistent, particularly for Python integration |

Flexible deployment options can suit teams with strict data security or air-gap requirements | |

What users say

Pricing

Bottom line

4. Julius: Best for fast data analysis and light forecasting without the model-building overhead

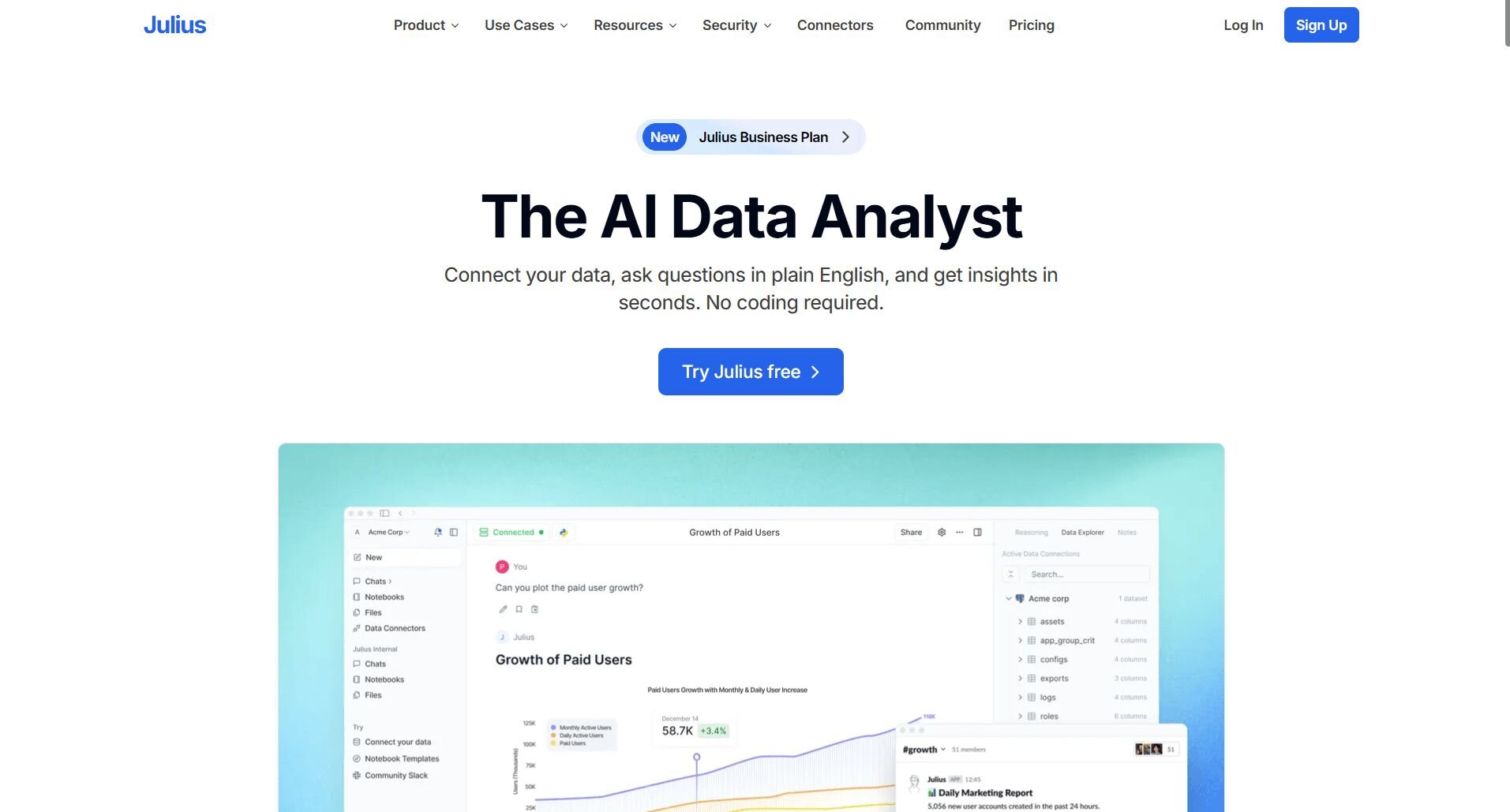

What it does: Julius is an AI-powered data analysis tool that lets you ask questions about your data in plain English and get charts, tables, and reports back without writing code.

Best for: Business users and analysts who want to query connected data sources or pull public and financial data from a prompt, without the overhead of building and maintaining models.

We built Julius for data professionals who need to move fast on analysis and light forecasting without setting up a full modeling pipeline. You can connect sources like Postgres, Snowflake, and BigQuery for live data analysis. For public and financial research, Julius can pull data for over 17,000 companies directly through the Financial Datasets integration, so many analyses don't require an upload at all.

As your team runs more queries on the same connected data, Julius builds context around your database structure and column relationships. Follow-up questions need less setup over time, and results can get more precise.

Key features

Data connectors: Connect to databases and cloud data sources, including Postgres, Snowflake, and BigQuery, so your analysis runs on live data rather than static exports.

Data search: Search for public data or pull live financial data for over 17,000 companies through the Financial Datasets integration, without uploading a file yourself.

Natural language queries: Ask questions about your data the way you'd ask a colleague and get a chart, table, or analysis back without writing SQL or Python.

Repeatable Notebooks: Save multi-step analysis workflows, schedule them, and get results delivered to email or Slack without rebuilding the report each time.

Adaptive data understanding: Julius builds context around your database structure over time, reducing the setup needed as your team runs more queries on the same connected source.

Pros and cons

| ✅ Pros | ❌ Cons |
|---|---|
| Connects to live databases and can source public or financial data directly, so you can start analysis with or without your own data | Results can vary if your source data has inconsistent formatting or naming |
| Notebooks let you schedule and repeat reports without rebuilding them each time | The adaptive data understanding builds over time, so early queries on a new connection may need more context |
| Analysts can run queries and explore data independently without SQL knowledge, freeing up data science teams for deeper modeling work | |

What users say

Pricing

| Plan | 💰 Price billed annually | 💰 Price billed monthly |
|---|---|---|
| Free | $0 | $0 |
| Pro | $16/month | $20/month |
| Business | $33/month | $40/month |
| Growth | $375/month | $450/month |

Bottom line

5. Alteryx: Best for no-code data prep and blending

What it does: Alteryx is a data analytics platform that lets you clean, blend, and prep data from multiple sources through a drag-and-drop workflow builder without writing code.

Best for: Analysts and operations teams that need to prep and blend data from multiple sources before analysis or modeling, without relying on SQL or Python.

Key features

Drag-and-drop workflow builder: Build end-to-end data preparation and blending workflows by connecting pre-built tools in a visual canvas.

Geospatial analysis: Run location-based analysis and spatial joins directly within a workflow without a separate GIS tool.

Python and R integration: Add custom Python or R scripts into your workflow for steps that go beyond the built-in tool library.
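A spatial join of the kind mentioned above typically tags each point (say, a store location) with the region polygon that contains it. The sketch below uses the classic ray-casting point-in-polygon test on made-up planar coordinates. It is a generic illustration, not how Alteryx implements its spatial tools; the `regions` and `stores` data are hypothetical, and real GIS work would also handle map projections, points lying exactly on a boundary, and spatial indexing for speed.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


def point_in_polygon(pt: Point, poly: List[Point]) -> bool:
    """Ray casting: count how many polygon edges a ray cast rightward from pt crosses."""
    x, y = pt
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        # Only edges that straddle the ray's y-coordinate can be crossed.
        if (y1 > y) != (y2 > y):
            # x-coordinate where this edge meets the horizontal line through pt.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside  # an odd number of crossings means inside
    return inside


def spatial_join(points: Dict[str, Point], regions: Dict[str, List[Point]]) -> Dict[str, Optional[str]]:
    """Tag each point with the first region that contains it, or None if none does."""
    return {
        name: next((r for r, poly in regions.items() if point_in_polygon(pt, poly)), None)
        for name, pt in points.items()
    }


regions = {
    "west": [(0, 0), (5, 0), (5, 10), (0, 10)],
    "east": [(5, 0), (10, 0), (10, 10), (5, 10)],
}
stores = {"store_a": (2, 3), "store_b": (7, 8), "store_c": (12, 1)}
print(spatial_join(stores, regions))
# prints {'store_a': 'west', 'store_b': 'east', 'store_c': None}
```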

Pros and cons

| ✅ Pros | ❌ Cons |
|---|---|
| Drag-and-drop interface can make data prep accessible to analysts without SQL or coding experience | Performance can slow down on very large or complex workflows |
| Workflow automation can reduce the time spent on repetitive data preparation tasks | Moving to the cloud-based version requires loading data into the cloud, which may raise security concerns for some teams |
| Geospatial tools are built in, so location-based analysis doesn't need a separate platform | |

What users say

Pro: "I like Alteryx's intuitive drag and drop interface, which makes building workflows easy. It drastically reduces the time spent on data preparation and blending. I can automate repetitive tasks without writing code, and it handles large datasets smoothly." - Venkata M., G2

Pricing

Bottom line

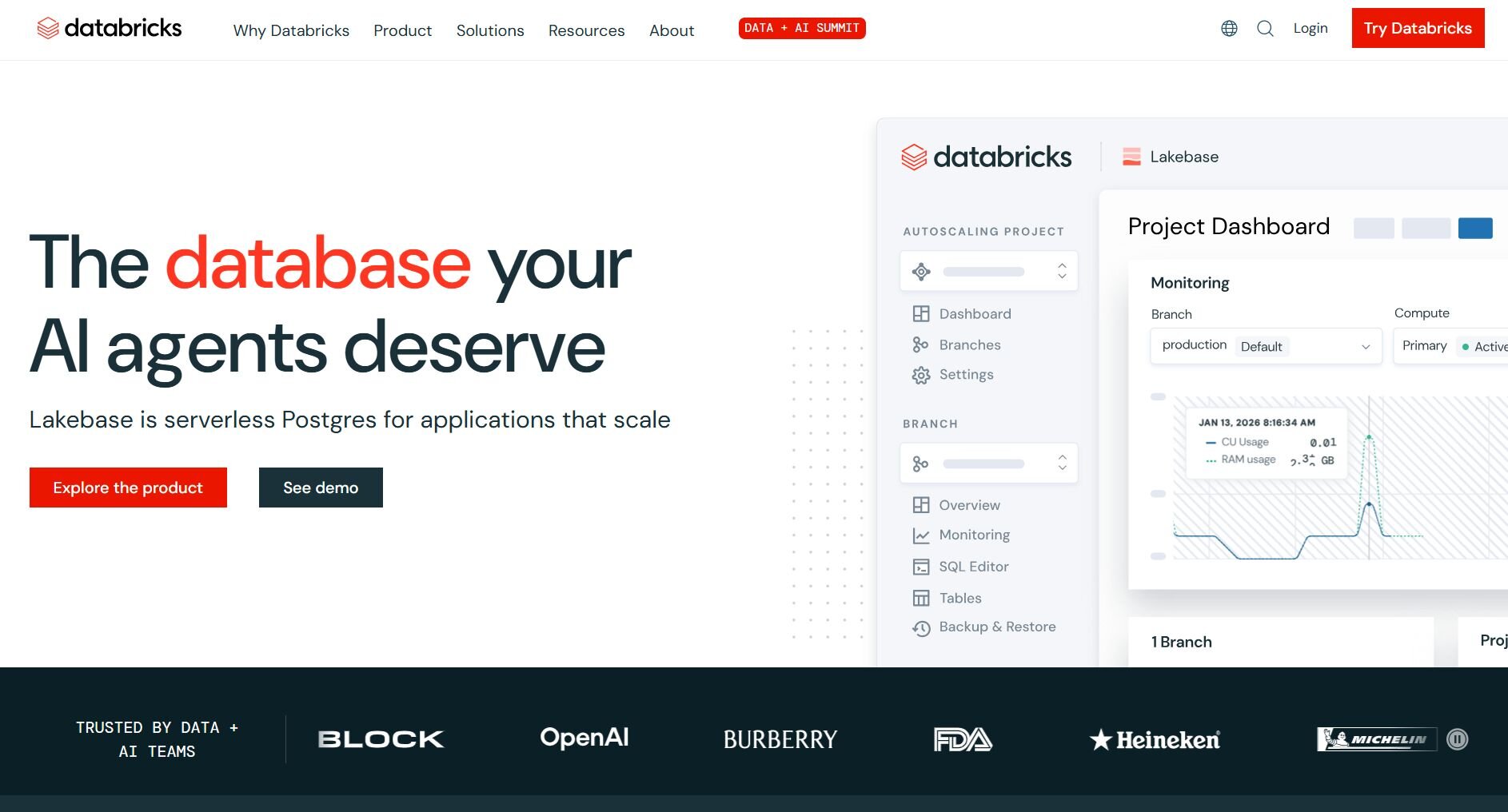

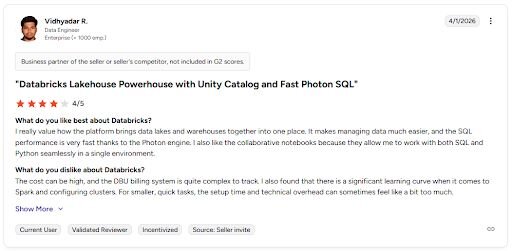

6. Databricks: Best for large-scale ML pipelines and data engineering

What it does: Databricks is a unified data and AI platform that combines data engineering, machine learning, and analytics workloads in a single lakehouse environment.

Best for: Data engineering and data science teams that need to build, manage, and deploy large-scale ML pipelines on top of a unified data infrastructure.

Key features

Unified lakehouse: Combine data lake storage and data warehouse capabilities in one environment to run analytics and ML on a single copy of data.

Collaborative notebooks: Build, run, and share SQL, Python, and R notebooks in a shared workspace with version control and commenting.

AutoML: Run automated machine learning experiments directly within the platform to generate baseline models from your data.

Pros and cons

| ✅ Pros | ❌ Cons |
|---|---|
| Unified lakehouse architecture can reduce the need to move data between separate storage and compute environments | DBU-based billing can be difficult to predict and monitor without dedicated cost management processes |
| Collaborative notebooks can make it easier for data engineers and scientists to work in the same environment | Steep learning curve for users without cloud engineering experience |
| Built-in AutoML can give data science teams a faster starting point for model development | |

What users say

Pricing

Bottom line

Special mentions

The tools below span everything from open-source workflow builders to enterprise BI platforms with predictive capabilities built in.

Here are 11 more predictive analytics tools worth considering:

IBM SPSS Modeler: IBM SPSS Modeler is a visual predictive modeling platform with a drag-and-drop workflow interface that covers a wide range of statistical and machine learning techniques. I found it strong for teams that need statistical depth without writing much code, though the interface can feel dated compared to newer platforms.

Tableau: Tableau is a data visualization platform that includes predictive features like forecasting and trend analysis. It works well for communicating findings through polished dashboards, but teams that need to build and deploy models from scratch may find its modeling capabilities limited.
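Tableau's documentation describes its built-in forecasting as based on exponential smoothing. The sketch below shows only the simplest, non-seasonal variant to illustrate the idea; it is not Tableau's implementation, and the `sales` series and the smoothing factor `alpha = 0.5` are made-up assumptions.

```python
from typing import List, Sequence


def simple_exp_smoothing(series: Sequence[float], alpha: float, horizon: int) -> List[float]:
    """Flat forecast from simple exponential smoothing (no trend or seasonality)."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]
    for value in series[1:]:
        # New level blends the latest observation with the previous level.
        level = alpha * value + (1 - alpha) * level
    # The simple variant projects the final level forward unchanged.
    return [level] * horizon


sales = [10.0, 12.0, 11.0, 13.0]  # made-up monthly values
print(simple_exp_smoothing(sales, alpha=0.5, horizon=3))  # prints [12.0, 12.0, 12.0]
```

A higher `alpha` weights recent observations more heavily. Variants such as Holt's method add a trend term, and Holt-Winters adds seasonality, which matters once the data shows a clear upward drift or a repeating pattern.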

Qlik Sense: Qlik Sense is a self-service analytics platform built around an associative data engine that lets you explore relationships across your data freely. It can reveal patterns that more structured query tools might miss. However, it takes time to get comfortable with how the associative model works.

Amazon SageMaker: Amazon SageMaker is a cloud-based platform for building, training, and deploying machine learning models within the Amazon Web Services (AWS) ecosystem. It covers the full ML lifecycle well, but teams without cloud engineering experience may struggle to configure and manage the infrastructure it runs on.

SAP Analytics Cloud: SAP Analytics Cloud combines BI, planning, and predictive analytics in one platform. It also integrates naturally with other SAP products. It can be a practical option for organizations already running SAP ERP, though it may feel less flexible outside that ecosystem.

RapidMiner (Altair): RapidMiner is a data science platform that uses a visual, node-based workflow builder to help teams build predictive models without heavy coding. It supports AutoML and integrates with Python, R, and SAS. The downside is that the visual workflow approach can feel limiting for teams that need more custom model control.

MicroStrategy: MicroStrategy is an enterprise BI platform that includes predictive analytics features alongside its core reporting and dashboard capabilities. It can work well for teams that need accessible dashboards on the go, but model-building depth isn't really its strong suit.

IBM Cognos Analytics: IBM Cognos Analytics is an AI-powered BI platform that covers reporting, dashboards, and predictive forecasting in one place. It works best within larger IBM-heavy environments, and teams outside that ecosystem may need extra setup to connect it to their existing tools.

Microsoft Azure ML: Microsoft Azure ML is a cloud-based machine learning platform that supports both code-first workflows and a drag-and-drop designer for mixed-skill teams. It covers the full ML lifecycle well, but teams with simpler modeling needs may find the platform more complex than their use case calls for.

Google Looker: Google Looker is a BI platform that uses LookML to define metrics at the source and can surface predictive insights through Vertex AI. It fits well into Google Cloud environments. However, building and maintaining LookML models does require some technical investment upfront.

KNIME: KNIME is an open-source analytics platform that lets you build predictive workflows through a visual, node-based interface without writing code. It supports integrations with Python, R, and H2O.ai AutoML, and works well for solo analysts or small teams. Larger teams that need collaboration and deployment features will likely need the enterprise version.

Which predictive analytics tool should you choose?

The right predictive analytics tool depends on what kind of modeling your team needs and how much technical depth you can realistically work with.

Choose DataRobot if you:

Want to automate the model-building process without relying heavily on data scientists

Need a platform that ties predictive models back to measurable business outcomes

Work in an enterprise environment that requires model governance and monitoring

Choose SAS Viya if you:

Need a governed, auditable analytics environment where compliance matters

Already work with SAS and want to stay within a familiar ecosystem

Have a technical team that can work across SAS, Python, and R

Choose H2O.ai if you:

Want a fast, open-source AutoML platform that doesn't require heavy infrastructure

Need flexible deployment options, including air-gapped or cloud environments

Have data scientists comfortable working with Python or R

Choose Julius if you:

Want to ask questions about your data in plain English without building models

Need to analyze connected data sources like Postgres, Snowflake, or BigQuery

Want to start from a business question and pull public or financial data without uploading a file first

Choose Alteryx if you:

Need to clean, blend, and prep data before it's ready for modeling

Want a no-code workflow builder that doesn't require SQL or Python

Work with geospatial data or data from multiple disconnected sources

Choose Databricks if you:

Have a team of data engineers and scientists who need a unified environment for pipelines and ML

Work with large-scale data that needs to be processed before modeling

Are already invested in cloud infrastructure and want a platform that scales with it

Final verdict

The best-rated predictive analytics tools for data professionals on this list range from no-code AutoML platforms to full-scale ML engineering environments. DataRobot and H2O.ai work well for teams that need automated model building, and SAS Viya suits organizations where compliance and auditability matter.

If your priority is exploring and acting on data without a modeling background, Julius is worth trying first.

Here’s how Julius helps:

Data search: Type your question, and Julius can search for relevant public data or pull live financial market data for over 17,000 companies through its Financial Datasets integration, so you can start your analysis before you have a dataset ready.

Direct connections: Link databases like PostgreSQL, Snowflake, and BigQuery, or integrate with Google Ads and other business tools. You can also upload CSV or Excel files. Your analysis can reflect live data, so you’re less likely to rely on outdated spreadsheets.

Repeatable Notebooks: Save an analysis as a notebook and run it again with fresh data whenever you need. You can also schedule notebooks to send updated results to email or Slack.

Smarter over time: Julius includes a Learning Sub Agent, an AI that adapts to your database structure over time. It learns table relationships and column meanings as you work with your data, which can help improve result accuracy.

Quick single-metric checks: Ask for an average, spread, or distribution, and Julius shows you the numbers with an easy-to-read chart.

Built-in visualization: Get histograms, box plots, and bar charts on the spot instead of jumping into another tool to build them.

One-click sharing: Turn an analysis into a PDF report you can share without extra formatting.

For data professionals who want quick answers from connected or public data without building models, Julius is worth trying. You can bring your own data or start with a question and have Julius find and compile the data you need.